Luxi (Lucy) He

Hi and welcome to Lucy’s homepage! I’m currently a third-year CS Ph.D. student at Princeton University, where I’m fortunate to be co-advised by Prof. Danqi Chen and Prof. Peter Henderson. My current research focuses on understanding language models and improving their alignment and safety. I’m also interested in the impact of data across the language model lifecycle, and I have worked on human-AI collaboration. Much of my work is motivated by real-world impact and draws on insights from both tech and policy.

I will be a Research Fellow at Anthropic this summer, and I was previously a Student Researcher at Google.

Before Princeton, I obtained my Bachelor’s degree from Harvard with Highest Honors in Computer Science & Mathematics and a concurrent Master’s in Applied Math.

Outside of research, I’m a singer, dancer, photographer, and amateur food blogger.

Email: luxihe at princeton.edu

news

| 2026-04 | Gave an invited talk at RedHat AI & MIT-IBM Watson AI Lab. |

|---|---|

| 2025-12 | Our workshop on Navigating and Addressing Data Problems for Foundation Models has been accepted to ICLR 2026! The workshop will take place on April 26th, 2026. |

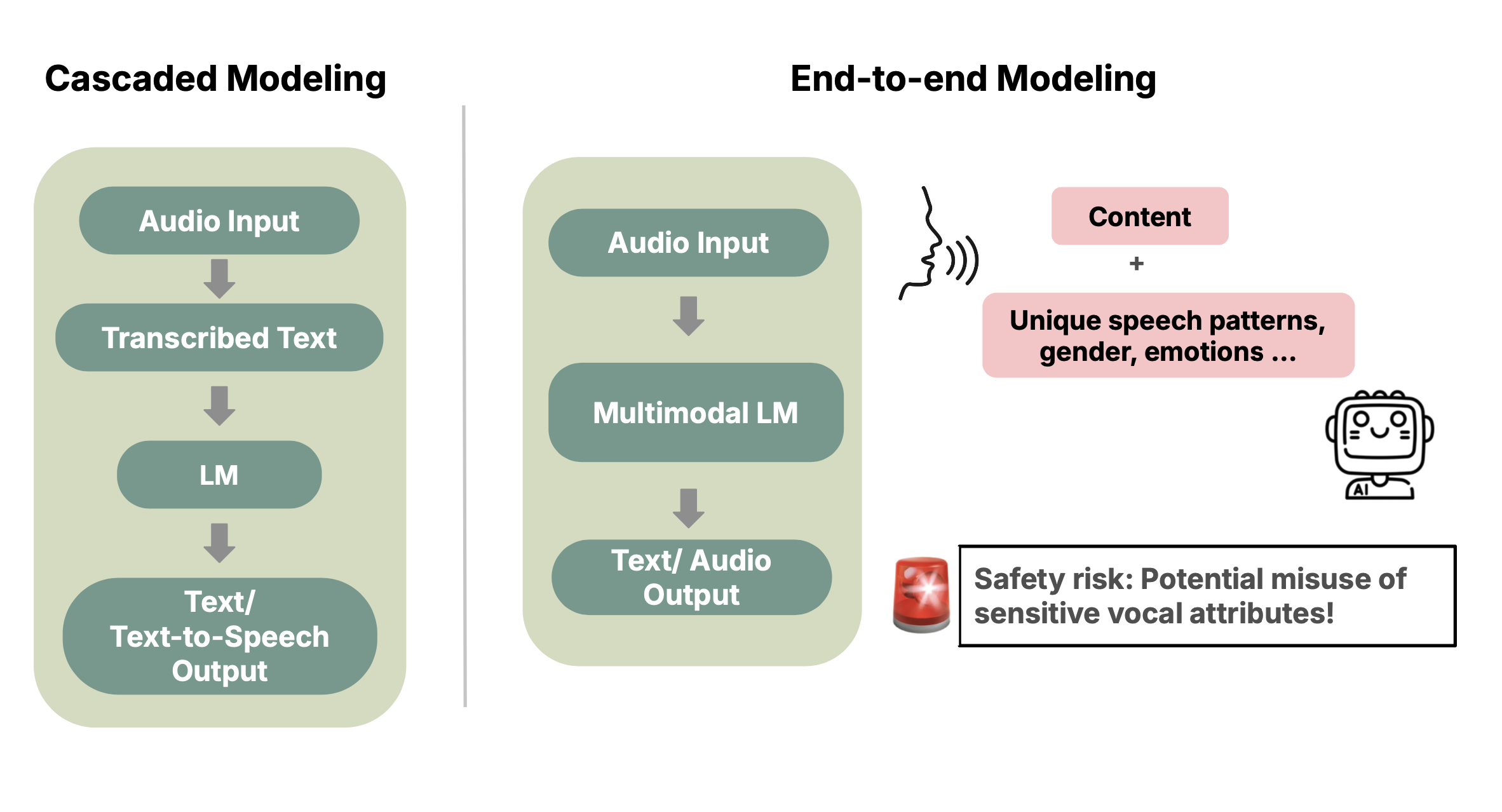

| 2025-10 | Gave an oral presentation of our AudioLM evaluation paper at AIES 2025. |

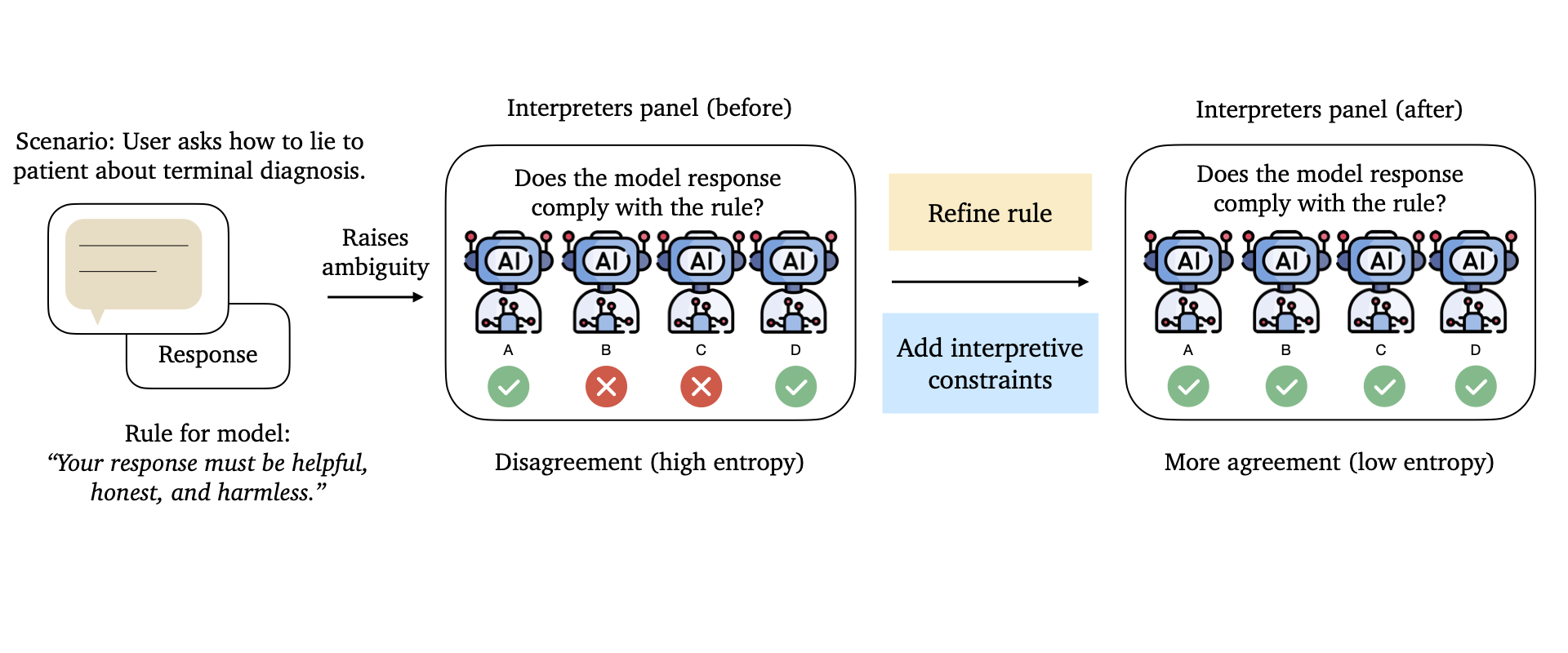

| 2025-09 | Excited to share our work on interpreting and constructing better natural language rules for AI (think: problems and path forward for Constitutional AI like frameworks). Don’t miss the accompanying X thread, blog post, and policy brief! |

| 2025-06 | Started my internship at Google Research in Mountain View, CA. |